Transformations rarely fail because a single feature breaks. They fail because small uncertainties accumulate until nobody can confidently say the end-to-end business will hold together at go-live.

Quality Assurance (QA) exists to stop that drift. And while the industry is currently obsessed with using AI to generate test artifacts, its true power lies in strengthening clarity, consistency, and decision-making.

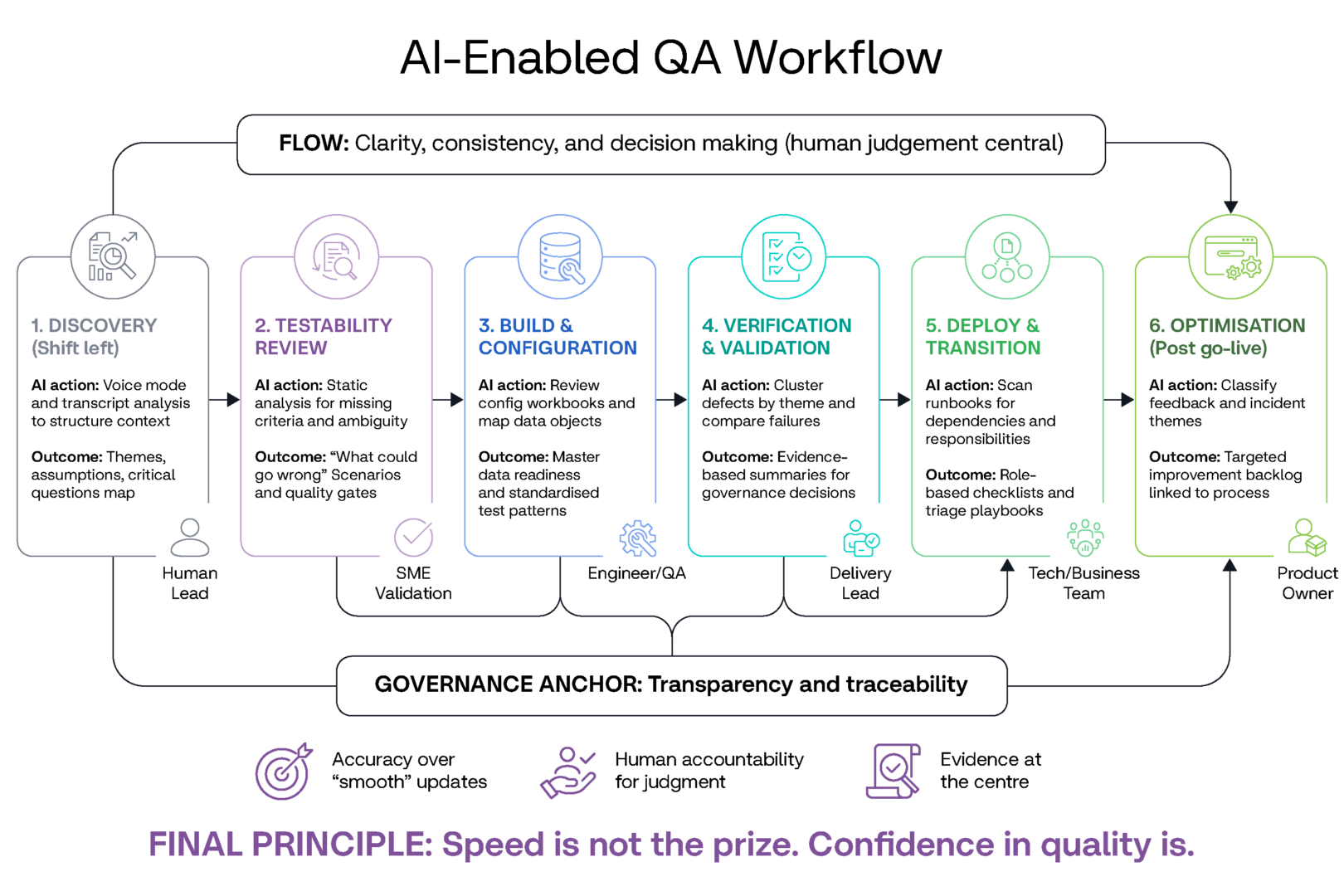

When your programme is under pressure, your test strategy must stand up in governance. Your coverage model must trace back to business outcomes. Your exit calls must be defensible. You do not achieve that with general prompts. You achieve it through the intentional and targeted application of AI across the transformation lifecycle, with humans firmly owning the judgement and accountability.

LLMs lose value when they are used generically; in a transformation context, AI needs strategic, targeted application to be effective. Based on my experience delivering high-stakes transformation programmes, here is how I practically leverage AI across the QA lifecycle.

Discovery phase

In business transformation, there are many moments (in planning, execution, and decision-making) when quality is either built in or quietly compromised. The early stages are where the most value is created (the familiar “shift left”), because ambiguity is cheapest to resolve before it becomes configuration, code, data transformations and migrations, and training content. This is the first place AI earns its keep.

In QA discovery for a large transformation, I use voice mode on an LLM to offload everything I know about the programme. I talk through the scope, the moving parts, the integrations, where the business is likely to feel pain, what “good” must look like in production, and my past experience on similar transformation programmes. I then upload relevant artifacts, along with transcripts of interviews, discussions, and online meetings I’ve had with programme leads, business stakeholders, and vendors. Finally, I prompt the model to organise it all into themes, risks, dependencies, assumptions, and questions that must be answered.

The output is a sharper starting point that helps me lead the right QA conversations early in discovery. It makes discussions about risk more productive, helps me articulate risk appetite versus consequences and what success looks like, and flows well into developing the Test Strategy and subsequent planning artifacts.

Design phase

In solution design, I use AI as a testability reviewer. At this stage, transformation requirements are often incomplete and written at a high level: meaningful to the business, but not detailed or technical enough to be testable in practice. AI can quickly deliver high-value static analysis that highlights missing acceptance criteria, ambiguous business rules, and process steps that have no observable outcome. It can also propose the “what could go wrong?” conditions that teams forget when they are focused on the happy paths. Prompt structure always matters, but so does planning when AI is used and what outputs are expected from it.

The key is that I treat AI like a high-speed second set of eyes. I validate these findings with subject matter experts and delivery leads, translating AI insights into concrete decisions regarding scope and quality gates.

Be prepared to throw out AI suggestions and spot the high-value suggestions that need urgent attention. This is where the professional QA “human-in-the-loop” is vital.

Build phase

When the build and configuration phase begins, many programmes accidentally create a testing bottleneck because QA has not been shifted left. A practical quality lever here is QA static analysis, so defects are caught before they leak into formal test cycles.

Another lever that is routinely underestimated is master data readiness. If your core reference data is not loaded early, every integration and business process test becomes artificial, and teams start debugging test data instead of validating the solution. Beyond producing more efficient first drafts for general test analysis and design, I’ve used AI at this stage to review configuration requirements for inconsistencies, generate candidate negative tests for customised components, and help quality engineers standardise test patterns. It can also help QA teams map business process steps to data objects and highlight prerequisites for end-to-end testing, which are usually complex to determine.
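Once process steps have been mapped to data objects, the prerequisite check itself is simple to express. The sketch below assumes a hypothetical mapping and a hypothetical list of loaded master data; the process names and data objects are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessStep:
    process: str             # end-to-end business process name
    step: str                # step within that process
    data_objects: frozenset  # master data the step needs (illustrative names)


def missing_prerequisites(steps, loaded_objects):
    """Return {process: sorted list of required data objects not yet loaded}."""
    loaded = set(loaded_objects)
    gaps = {}
    for s in steps:
        missing = s.data_objects - loaded
        if missing:
            gaps.setdefault(s.process, set()).update(missing)
    return {process: sorted(objs) for process, objs in gaps.items()}


# Hypothetical example data, not taken from any real programme.
STEPS = [
    ProcessStep("Order to Cash", "Create sales order", frozenset({"Customer", "Material", "Price list"})),
    ProcessStep("Order to Cash", "Post invoice", frozenset({"Customer", "Tax code"})),
    ProcessStep("Procure to Pay", "Raise purchase order", frozenset({"Vendor", "Material"})),
]
LOADED = {"Customer", "Material", "Vendor"}

gaps = missing_prerequisites(STEPS, LOADED)
for process, objs in gaps.items():
    print(f"{process}: blocked by {', '.join(objs)}")
```

In this example only Order to Cash is reported, because Procure to Pay already has everything it needs. The hard part in practice is building and validating the mapping, which is where AI support and SME review come in.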

Test execution phase

Verification and validation (test execution) is the phase where time pressure is highest and where discipline is essential. That means applying the QA strategy and plan as agreed, with any deviation tied to risk, mitigation, and business acceptance of the residual risk. Strategic use of AI can reduce noise. For example, it can:

- cluster defects by theme and likely root cause, so delivery teams stop treating each defect as an isolated incident.

- compare repeated failures across environments and releases, which often reveals deeper data or integration issues.

- produce clear summaries for governance, not as polished storytelling, but as an accurate translation of evidence into decisions.

These ideas in QA management reporting are not revolutionary, but they become easier to analyse and report on with well-structured prompts, good context, and reliable sources.
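The first two ideas in the list above can be sketched in a few lines once each defect carries a theme. This sketch assumes the theme label comes from an earlier AI-assisted classification that a human has reviewed; the defect records and field names are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical defect records; "theme" is assumed to be assigned upstream
# by an AI-assisted, human-reviewed classification step.
DEFECTS = [
    {"id": "D-101", "theme": "customer master data", "env": "SIT"},
    {"id": "D-102", "theme": "customer master data", "env": "UAT"},
    {"id": "D-103", "theme": "pricing interface", "env": "SIT"},
    {"id": "D-104", "theme": "customer master data", "env": "SIT"},
    {"id": "D-105", "theme": "report layout", "env": "UAT"},
]


def cluster_by_theme(defects):
    """Count defects per theme, most frequent first."""
    return Counter(d["theme"] for d in defects).most_common()


def recurring_across_envs(defects, min_envs=2):
    """Return themes seen in at least `min_envs` distinct environments."""
    envs = defaultdict(set)
    for d in defects:
        envs[d["theme"]].add(d["env"])
    return {theme: sorted(e) for theme, e in envs.items() if len(e) >= min_envs}


clusters = cluster_by_theme(DEFECTS)
recurring = recurring_across_envs(DEFECTS)
print(clusters)
print(recurring)
```

A theme that recurs across environments is a prompt for a root cause conversation, not a conclusion in itself.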

When programmes are heading toward production, a few measures become particularly important. Data migration accuracy has to be high enough that the solution behaves correctly with real data, and end-to-end test pass rates need to reflect genuine stability rather than selective functional execution.

Severity one and two defects serve as critical decision points, often becoming flashpoints of contention as the implementation date nears. AI can help keep these vital data points visible in a consistent manner and help temper the human emotion we see in late-stage defect triage sessions.
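One way to keep these measures visible and consistent is to compute them the same way every time. The sketch below is an assumption about how that might look: the field names are hypothetical and the thresholds are placeholders, since real exit criteria are a governance decision.

```python
from dataclasses import dataclass


@dataclass
class ReadinessEvidence:
    migrated_records: int
    reconciled_records: int  # records that matched the source after migration
    e2e_planned: int         # end-to-end scenarios in the agreed scope
    e2e_passed: int
    open_sev1: int
    open_sev2: int


# Placeholder thresholds for illustration only.
MIN_MIGRATION_ACCURACY = 0.99
MIN_E2E_PASS_RATE = 0.95
MAX_OPEN_SEV1 = 0
MAX_OPEN_SEV2 = 0


def readiness_findings(ev):
    """Return plain-language findings; an empty list means no threshold is breached."""
    findings = []
    accuracy = ev.reconciled_records / ev.migrated_records if ev.migrated_records else 0.0
    # Pass rate is measured against planned scope, not executed scope, so
    # selective execution cannot inflate the number.
    pass_rate = ev.e2e_passed / ev.e2e_planned if ev.e2e_planned else 0.0
    if accuracy < MIN_MIGRATION_ACCURACY:
        findings.append(f"Migration accuracy {accuracy:.2%} is below {MIN_MIGRATION_ACCURACY:.0%}")
    if pass_rate < MIN_E2E_PASS_RATE:
        findings.append(f"End-to-end pass rate {pass_rate:.1%} is below {MIN_E2E_PASS_RATE:.0%}")
    if ev.open_sev1 > MAX_OPEN_SEV1:
        findings.append(f"{ev.open_sev1} open severity one defect(s)")
    if ev.open_sev2 > MAX_OPEN_SEV2:
        findings.append(f"{ev.open_sev2} open severity two defect(s)")
    return findings


# Hypothetical evidence snapshot.
evidence = ReadinessEvidence(
    migrated_records=200_000, reconciled_records=199_100,
    e2e_planned=40, e2e_passed=37, open_sev1=0, open_sev2=2,
)
findings = readiness_findings(evidence)
for line in findings:
    print(line)
```

The output is a list of facts for the triage or exit meeting. Whether a breach is acceptable remains a human decision with an accountable owner.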

Go-live phase

Deploy and transition is where transformation risk turns into business risk. Cutover plans usually include assumptions, and those assumptions are where most late surprises hide. AI works well here as a structured reviewer. I’ve used it to scan a cutover runbook and surface missing dependencies, unclear responsibilities, and steps that have no validation checks.
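The kind of structured review described above can be pictured as a set of checks applied to every runbook step. This is a minimal sketch under assumptions: the runbook is already available as structured records, and the step fields and example steps are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RunbookStep:
    step_id: str
    description: str
    owner: str = ""
    validation: str = ""  # how the team confirms the step worked
    depends_on: list = field(default_factory=list)


def review_runbook(steps):
    """Return (step_id, issue) pairs for gaps a reviewer should question."""
    known = {s.step_id for s in steps}
    issues = []
    for s in steps:
        if not s.owner.strip():
            issues.append((s.step_id, "no named owner"))
        if not s.validation.strip():
            issues.append((s.step_id, "no validation check"))
        for dep in s.depends_on:
            if dep not in known:
                issues.append((s.step_id, f"depends on unknown step {dep}"))
    return issues


# Hypothetical runbook extract.
RUNBOOK = [
    RunbookStep("C1", "Freeze legacy order entry", owner="Ops lead",
                validation="No new orders after cutoff timestamp"),
    RunbookStep("C2", "Run final data extract", owner="",
                validation="Record counts match source", depends_on=["C1"]),
    RunbookStep("C3", "Load open orders", owner="Data lead",
                validation="", depends_on=["C2", "C9"]),
]

issues = review_runbook(RUNBOOK)
for step_id, issue in issues:
    print(f"{step_id}: {issue}")
```

An LLM adds value where the runbook is free text and the gaps are implied rather than explicit. A fixed set of checks like these gives the AI review something consistent to be compared against.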

Run phase

After go-live, optimisation is what separates a successful switch-on from a successful transformation. Real user experience, incident patterns, and adoption behaviours are the feedback loop. AI can help classify and summarise themes from support tickets, user feedback, and operational data, then translate those themes into an improvement backlog that is tied back to business processes. That backlog helps refine the regression test suite, maintain test traceability post-implementation, and continuously prioritise regression testing.
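Tying ticket themes back to business processes and regression tests might look like the sketch below. It assumes each ticket has already been classified to a business process (for example by an AI-assisted step with human review); the ticket data, process names, and test identifiers are hypothetical.

```python
from collections import Counter

# Hypothetical tickets, already classified to a business process.
TICKETS = [
    {"id": "T-1", "process": "Order to Cash"},
    {"id": "T-2", "process": "Record to Report"},
    {"id": "T-3", "process": "Order to Cash"},
    {"id": "T-4", "process": "Procure to Pay"},
    {"id": "T-5", "process": "Order to Cash"},
    {"id": "T-6", "process": "Record to Report"},
]

# Hypothetical traceability from business process to regression tests.
REGRESSION_TESTS = {
    "Order to Cash": ["RT-010", "RT-011"],
    "Procure to Pay": ["RT-020"],
}


def prioritise_regression(tickets, tests_by_process):
    """Rank processes by ticket volume and attach their regression tests.

    A process with tickets but an empty test list is a traceability gap.
    """
    counts = Counter(t["process"] for t in tickets)
    return [
        (process, n, tests_by_process.get(process, []))
        for process, n in counts.most_common()
    ]


ranked = prioritise_regression(TICKETS, REGRESSION_TESTS)
for process, n, tests in ranked:
    label = ", ".join(tests) if tests else "no regression coverage"
    print(f"{process}: {n} tickets, {label}")
```

Ticket volume is only one possible signal. Business criticality and incident severity would normally weigh in as well, and that weighting is a judgement call for the QA lead.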

Conclusion

Across all of this, governance is the anchor. Transformation programmes need clear accountability, clear phase gates, and clear readiness criteria. If governance becomes optimistic reporting, quality is lost long before production. AI can improve governance by strengthening transparency, consistency, and traceability. It makes things worse when it is used to accelerate delivery without a plan or to produce smooth updates that hide risk. In QA, trust comes from accuracy, not from confidence in wording.

If you want something practical to apply immediately, think in terms of an AI-enabled QA workflow that mirrors how you already deliver transformation confidence. Start by capturing and structuring context to align the team on outcomes and risks. Document it as best you can in your strategy to secure stakeholder buy-in.

Use AI to challenge requirements and processes for testability before accelerating the build. Push quality left by supporting QA analysis on solution design/requirements and data readiness. Use AI in validation to reduce noise and strengthen the evidence going into governance decisions. Use it in cutover planning to expose assumptions and tighten readiness. Use it post go-live to turn operational reality into targeted improvement.

The teams that get the most value from AI in transformation QA may not be the ones with the cleverest prompts. They will be the ones who design repeatable workflows that keep humans accountable for judgement, keep evidence at the centre, and keep quality visible at every decision point. AI can make QA faster, but speed is not the main prize. Confidence in quality is.