Next steps: Strategic Test Management

At Assurity Consulting, our experts are deeply experienced in leveraging a variety of industry-leading Test Management tools, with Xray for Jira being a prominent example in our toolkit. Our commitment, however, extends beyond proficiency in a single platform.

Our core approach is fundamentally tool-agnostic: we prioritise the client’s unique context, goals, and technological ecosystem over allegiance to any specific vendor or solution. This stance allows us to work effectively across a diverse portfolio of clients, each with distinct needs, legacy systems, and compliance requirements, supported by our Human-Centred Test practice.

We adopt a “fit for purpose” strategy for Test Management. This involves a rigorous initial assessment to understand the client’s business objectives, testing maturity, regulatory landscape, and current toolchain. Based on this analysis, we select, configure, and implement, or pragmatically adapt to, the Test Management solution best suited to the task at hand, be it Xray, Zephyr, qTest, an existing in-house solution or another platform.

The ultimate goal of this agnostic, purpose-driven approach is simple: to ensure we consistently meet and exceed the client’s defined purpose, achieve all key testing objectives (such as coverage, defect reduction, and time-to-market), and deliver measurable, sustainable outcomes that enhance the overall quality and reliability of their software products. Our expertise ensures that the chosen solution, including powerful tools like Xray, serves as an enabler for efficient, high-quality delivery, rather than a constraint.

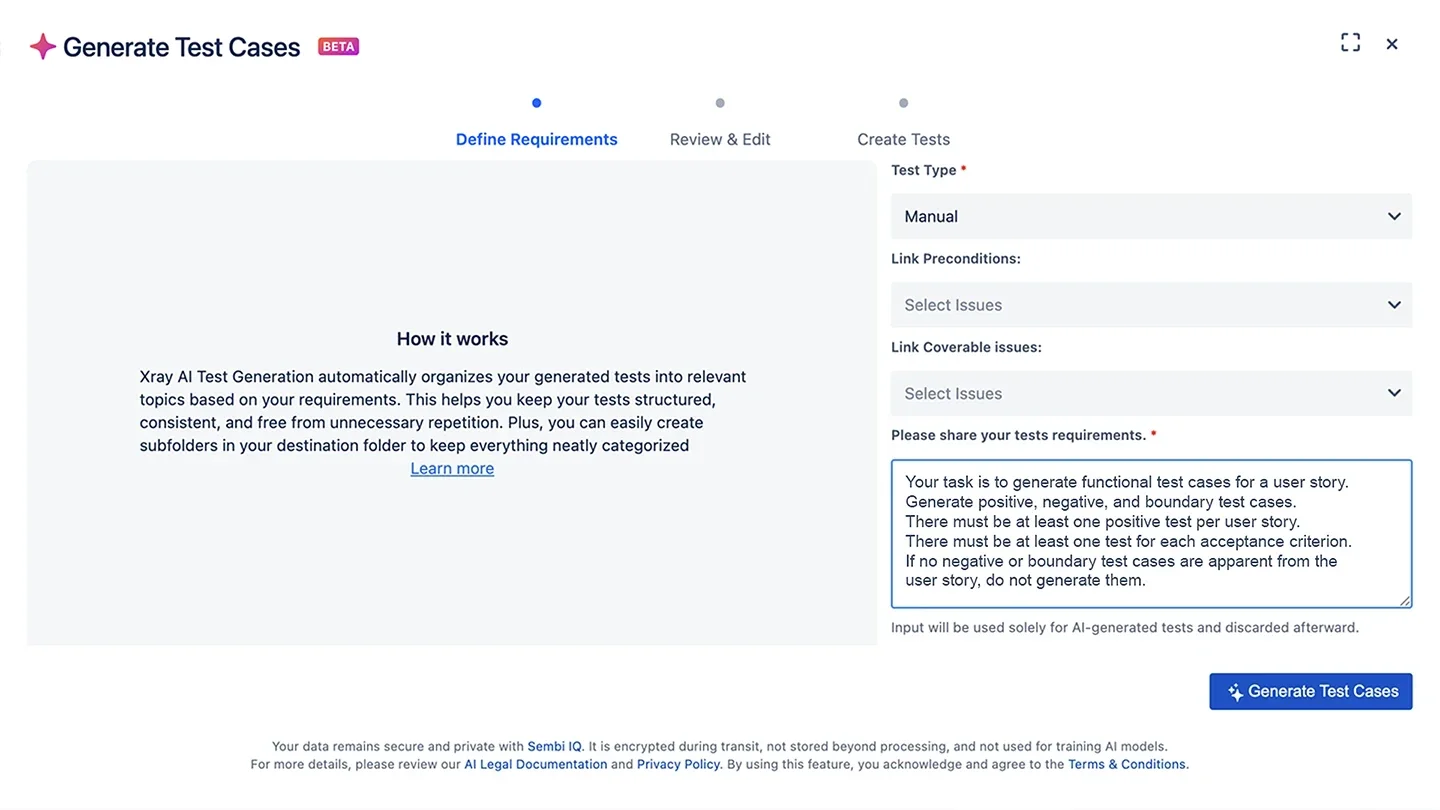

When Xray released its AI Test Case Generator, it arrived quietly as part of a standard product update. It simply appeared inside the test management suite we were already using day-to-day.

Because of this, it was never positioned as “introducing an AI tool”; it was introduced like any other enhancement to an approved test tool. Our challenge was to assess whether it genuinely improved the way we work. To do that, we needed to understand what it does, trial it in real scenarios and identify the processes we would need to create or amend.

This approach proved important, not just from a delivery perspective but in how confidence is built with clients.

Inputs used

To evaluate the feature meaningfully, we selected a set of typical Jira user stories: stories we would normally design tests for, complete with standard acceptance criteria and varying levels of detail.

Using these as a starting point, we generated test scenarios through the AI Test Case Generator. This allowed us to see how the feature interpreted real project inputs and how closely the output aligned with expected system behaviour and business intent.

Figure 1: Xray AI Test Case Generator and prompt.

Observations

The AI module generated test scenarios quickly, covering multiple paths for each user story. This sped up the early stage of test design, where effort is usually spent creating the initial test structure.

What stood out was not just the speed; having a set of drafted scenarios changed how the work was approached. Testers moved more rapidly to reviewing logic, verifying intent and confirming coverage.

The test role shifted from drafting to assessing quality, relevance, coverage and risk mitigation.

Maintaining oversight

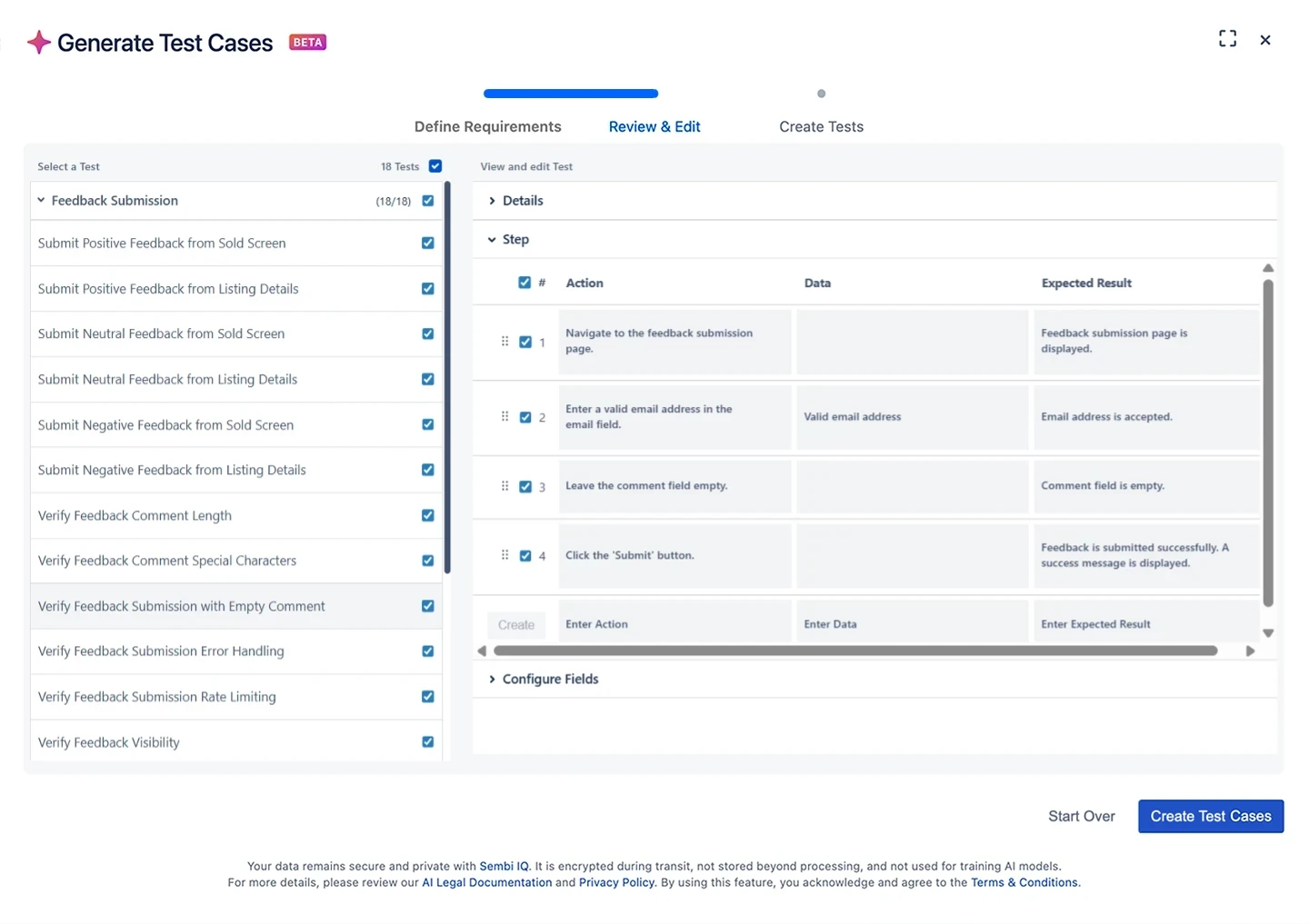

The generated tests provided a strong starting point, but they still, critically, required expert human validation. While the tool was effective at producing a broad set of scenarios, some outputs were incomplete, overly generic or misaligned with the nuances of our expected behaviour. In several cases, it introduced edge cases that weren’t relevant, while overlooking domain‑specific conditions that were essential. As a result, each scenario needed to be reviewed to confirm its accuracy, relevance and overall value before it could be incorporated into our test suite.

We introduced a simple “Reviewed” label to tag, track and report on the tests that testers had reviewed. This helped distinguish between generated content and validated content and ensured that AI-assisted tests were held to the same quality bar as those wholly created by professional testers.

Figure 2: Reviewing and confirming AI-generated test cases before approval.
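To make the “Reviewed” label idea concrete, here is a minimal sketch of how such a label could feed a simple report. It assumes test cases have already been exported into plain records; the `TestRecord` type, the `ai_generated` flag, the sample keys and the `review_summary` helper are all hypothetical names used for illustration and are not part of Xray or Jira.

```python
from dataclasses import dataclass, field


@dataclass
class TestRecord:
    """Hypothetical, simplified view of an exported test case."""
    key: str                                      # issue key, e.g. "DEMO-1" (made up)
    labels: set[str] = field(default_factory=set)
    ai_generated: bool = False                    # assumption: origin is recorded somehow


def review_summary(tests, reviewed_label="Reviewed"):
    """Count AI-generated tests and list those still awaiting human review."""
    generated = [t for t in tests if t.ai_generated]
    reviewed = [t for t in generated if reviewed_label in t.labels]
    pending = sorted(t.key for t in generated if reviewed_label not in t.labels)
    return {
        "generated": len(generated),
        "reviewed": len(reviewed),
        "pending": pending,
    }


# Illustrative data only: two generated tests, one of them reviewed,
# plus one manually written test that the report ignores.
sample = [
    TestRecord("DEMO-1", {"Reviewed"}, ai_generated=True),
    TestRecord("DEMO-2", set(), ai_generated=True),
    TestRecord("DEMO-3", {"Reviewed"}, ai_generated=False),
]
print(review_summary(sample))
```

A report along these lines keeps the distinction between generated and validated content visible, so that no unreviewed scenario is treated as part of the approved suite.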

Process fit

One of the most valuable aspects of this module is that the capability lives entirely within Xray. The generated tests were formatted like any standard Xray test case; they could be edited, organised, linked for traceability and executed using existing workflows, whether manual or BDD-based.

There was no need to change roles, processes, or surrounding tooling. From a governance perspective, this significantly lowered the barrier to exploration and implementation for the test team. It also made conversations with stakeholders simpler, as the new capability is an enhancement to an already approved test management tool, rather than a new AI product.

As with any such capability, its use remains subject to appropriate security and privacy review, aligned with each client’s policies.

Insights from the evaluation

Exploring Xray’s AI Test Case Generator clarified where this capability fits within a modern testing workflow. It adds value at the beginning of test design, supports more focused review conversations and encourages earlier thinking about behaviour and coverage.

The evaluation also showed that AI can be introduced in a way that remains familiar while operating within clear, well‑defined controls.

Potential areas of benefit

From our trial, a few areas stood out as having practical impact:

- accelerating initial test coverage

- improving consistency when stories are clearly defined

- supporting earlier conversations around behaviour and expectations

Areas for future use

This exploration of Xray’s AI Test Case Generator was not about adopting an AI trend. It was about understanding a new capability as it became available within a familiar and trusted toolset, and rigorously assessing how it could enhance and streamline our established test design process.

The methodology adopted for this investigation was intentionally simple and iterative:

- Try it: Engage directly with the tool’s AI generation features using real-world scenarios.

- Observe it: Document the types of test cases generated, their relevance, and the speed of output.

- Understand it: Analyse the underlying logic and constraints of the AI, identifying its strengths and limitations in the context of our complex testing requirements.

By maintaining this focused, hands-on and critical perspective, we moved past the hype surrounding AI in QA. The outcome was a clearer, more grounded view of how this technology can support Quality Assurance work: not by replacing the skilled QA analyst, but by acting as an intelligent assistant. This has given us a practical, confidence-building understanding of AI’s role in test design, using AI to work smarter, not harder, in keeping with our value of human-centred excellence.